Important Information

You can view this page as a webpage or access this as a regular github repository.

See the top directory of this repository for instructions to set up the NAG Library for Java.

First-order active-set method (FOAS)

[ e04kff |

e04kfc |

handle_solve_bounds_foas ]

Implementations of first-order methods not only are ubiquitous and have a widespread use, they have also demonstrated to endure the challenges of ever-growing problems sizes imposed by the industry. Most notable are applications in statistics, e.g. parameter calibration for log-linear models, conditional random fields (L2-regularisation) or logistic multi-class regression, amongs many other. First-order methods and the Conjugate Gradient method inparticular have been a research subject for well over 50 years and continue to be improved.

FOAS is a first-order nonlinear conjugate method for large-scale bound-constrained nonlinear optimization. The solver is ideal for very large problems (tens of thousands or more variables) where the first-order derivatives are available or are relatively cheap to estimate.

e04kf is also part of the NAG Optimization Modelling Suite common handle interface. It offers clarity and consistency of the interface of the solvers within the suite, making it trivial to switch among compatible solvers.

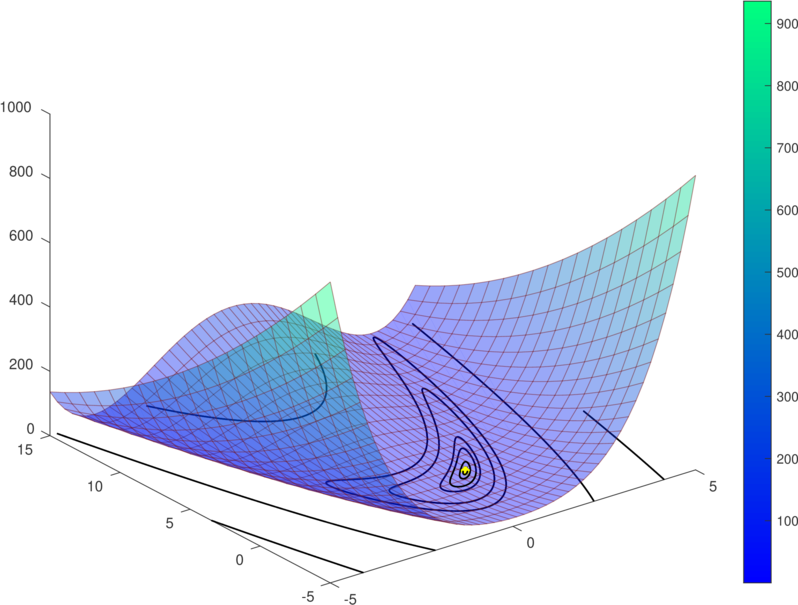

The following example illustrates the simple usage of FOAS to solve the bound-constrained 2D version of the Rosenbrock function which is a classical test function to measure and profile performance of solvers. Source of this example is avaible in Rosenbrock2d.java. It is also adviced to read this page that explains the example.

Figure 1. 2D Rosenbrock function, (left) the minimum is shown as a yellow dot at x=(1,1). On the right a bound constrained version showing with a purple dotted line a path towards the constrained solution point on the border.

More information

- FOAS information page

- FOAS in the NAG Library

- FOAS documentation page [C]

- Examples [ Java example | C example | Fortran example | Python example ]

A modern replacement for NAG solver uncon_conjgrd_comp (e04dg)

One of the main design objectives for handle_solve_bounds_foas (e04kf) was to provide a modern and attractive replacement for the CG solver e04dg introduced in Mark 12. While this solver was targeted for unconstrained NLPs, e04kf has been extended with an active-set method in order to solve bound-constrained NLPs.

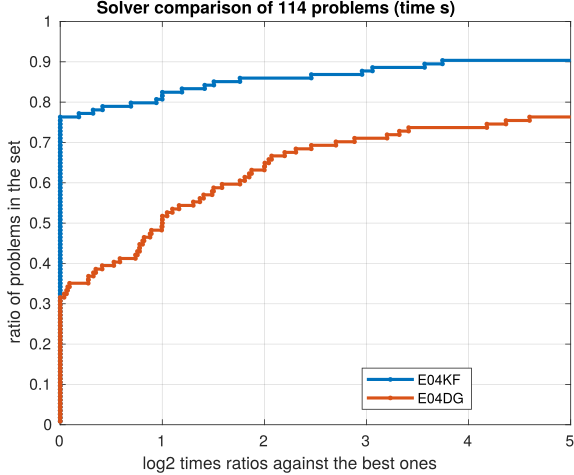

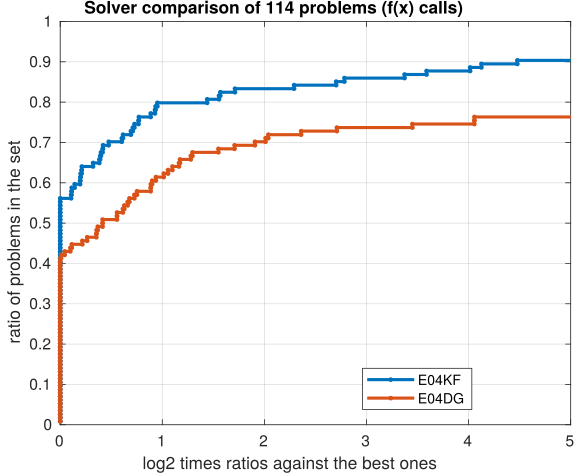

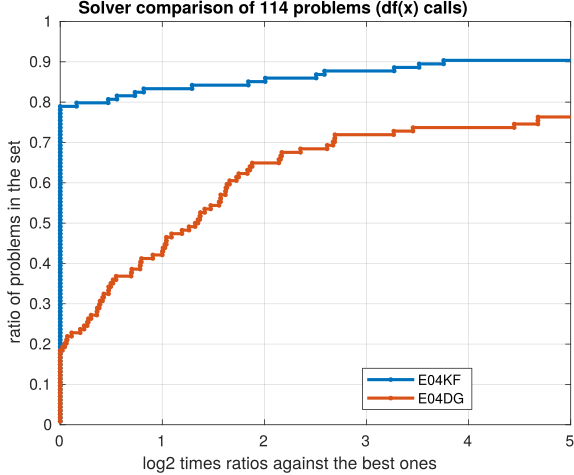

More recent and modern methods have been incorporated into e04kf making it much faster than e04dg. The following Figure 2 reports performance profiles over 114 unconstrained NLP CUTEst problems for both solvers e04kf and e04dg. Contrasting the three plots, it is evident that the new solver is more efficient in time (40% faster) and in general terms is less expensive: requires less function and gradient evaluations.

Figure 2. Performance profiles comparing solvers e04kf and e04dg. In the time plot on the left, higher line indicates faster solver. For the center and right plots higher line represent less functions (NF) or gradients (NG) calls. For all three plots it can be seen that e04kf is 40% faster in time and requires less function and gradient calls.

Migrating from Marks 25 and 26 to Mark 27

Notes and comments on migrating your code from uncon_conjgrd_comp (e04dg) to the new FOAS solver handle_solve_bounds_foas, (e04kff,

e04kfc):

Beale’s function

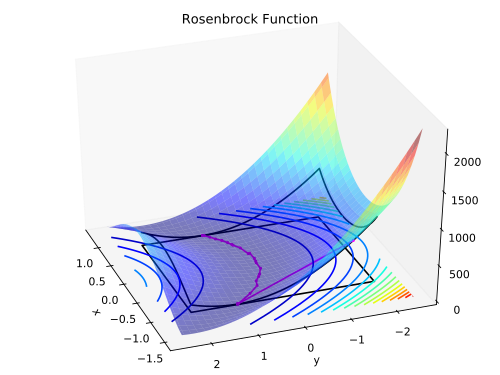

This example compares the steps taken by FOAS and L-BFGS-B 3.0 to find the solution point to Beale’s function. It is a classic nonconvex test function used to benchmark nonlinear optimization solvers.

Both solvers are used to find a minimum to the function and are started at the same initial point (2, 2). The following figure shows an animation of the steps taken by each solver to find a minimum to the function. It illustrates the agressive steps taken by the Conjugate Gradient method compared to the more conservative steps of BFGS.

Figure 3. Contour plots for Beale’s function (thin blue lines), and the steps taken by FOAS (red) and L-BFGS-B 3.0 (blue) to find the minimum of Beale’s funtion at (3, 0.5) marked with a magenta star. It can be seen that FOAS by the 8th step provides a reasonable approximation to the solution point while L-BFGS-B is still relatively far from it.

References

- Hager W W and Zhang H (2005) A New Conjugate Gradient Method with Guaranteed Descent and an Efficient Line Search. SIAM J. Optim. 16(1) 170–192

- Hager W W and Zhang H (2006a) Algorithm 851: CG DESCENT, a Conjugate Gradient Method with Guaranteed Descent. ACM Trans. Math. Software 32(1) 113–137

- Hager W W and Zhang H (2006b) A New Active Set Algorithm for Box Constrained Optimization. SIAM J. Optim. 17(2) 525–557

- Hager W W and Zhang H (2013) The Limited Memory Conjugate Gradient Method. SIAM J. Optim. 23(4) 2150–2168

- Nocedal J and Wright S J (2006) Numerical Optimization. (2nd Edition) Springer Series in Operations Research, Springer, New York